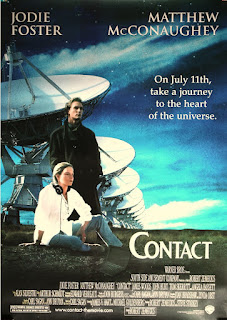

I have watched "Contact" several times and was watching it again the other day. Carl Sagan got a lot of things right in it, including the truth that even scientists have "faith" in matters disconnected with science. But one of the key parts of the film hasn't aged well for me.

Has Carl Sagan's "Contact" aged well?

When it comes to science, the practical is the moral and the moral the practical

Complementarity And The World: Niels Bohr’s Message In A Bottle

Steven Weinberg (1933-2021)

I was quite saddened to hear about the passing of Steven Weinberg, perhaps the dominant living figure from the golden age of particle physics. I was especially saddened since he seemed to be doing fine, as indicated by a lecture he gave at the Texas Science Festival this March. I think many of us thought that he was going to be around for at least a few more years.

Weinberg was one of a select few individuals who transformed our understanding of elementary particles and cemented the creation of the Standard Model (he coined the name), in his case by unifying the electromagnetic and weak forces; for this he shared the 1979 Nobel Prize in physics with Abdus Salam and Sheldon Lee Glashow. His 1967 paper which heralded the unification, "A Model of Leptons", was only 3 pages long and remains one of the most highly cited articles in physics history.

But what made Weinberg special was that he was not only one of the most brilliant theoretical physicists of the 20th century but also a pedagogical master with few peers. His many technical textbooks, especially his 3-volume "Quantum Theory of Fields", have educated a generation of physicists; meanwhile, his essays in the New York Times Book Review and other avenues and collections of articles published as popular books have educated the lay public about the mysteries of physics. But in his popular books Weinberg also revealed himself to be a real Renaissance Man, writing not just about physics but about religion, politics, philosophy, history including the history of science, opera and literature. He was also known for his political advocacy of science. Among scientists of his generation, only Freeman Dyson had that kind of range.

There have been some great tributes to him, and I would point especially to the ones by Scott Aaronson and Robert McNees, both of whom interacted with Weinberg as colleagues. The tribute by Scott especially shows the kind of independent streak that Weinberg had, never content to go with the mainstream and always seeking orthogonal viewpoints and original thoughts. In that he very much reminded me of Dyson; the two were in fact friends and served together on the government advisory group JASON, and my conversation with Weinberg, which I describe below, ended with him asking me to give my regards to Freeman, whom I was meeting in a few weeks.

I had the good fortune of interacting with Steve on two occasions, both rewarding. The first time I had the opportunity to be with him on a Canadian television panel on the challenges of Big Science. You can see the discussion here:

https://www.tvo.org/video/the-challenge-of-big-science

The next time was a few years later when I contacted him about a project and asked whether he had some thoughts to share about it. Steve didn't know me personally (although he did remember the Big Science panel) and was even then very busy with writing and other projects. In addition, the project wasn't something close to his immediate interests, so I was surprised when not only did he respond right away but asked me to call him at 10 AM on a Sunday and spoke generously for more than an hour. I still have the recording.

Steve was a great physicist, a gentleman and a Renaissance Man, a true original. We are unlikely to see the likes of him for a long time.

One of the reasons I feel particularly wistful with his passing is because he was among the last of the creators of modern particle physics. He worked in an enormously fruitful time in which theory went hand in hand with experiment. This is different from the last twenty years in which fundamental physics and especially string theory have been struggling to make experimental connections. In cosmology however, there have been very exciting developments, and Weinberg who devoted his last few decades to the topic was certainly very interested in these. Hopefully fundamental physics can become as involved with the productive interplay of theory and experiment as cosmology and condensed matter physics are, and hopefully we can again resurrect the golden era of science in which Steven Weinberg played such a commanding role.

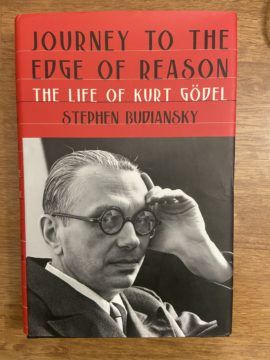

Kurt Gödel's open world

Two men walking in Princeton, New Jersey on a stuffy day. One shaggy-looking with unkempt hair, avuncular, wearing a hat and suspenders, looking like an old farmer. The other an elfin man, trim, owl-like, also wearing a fedora and a slim white suit, looking like a banker. The elfin man and the shaggy man used to make their way home from work every day. Passersby and motorists would strain their heads to look. Everyone knew who the shaggy man was; almost nobody knew who his elfin companion was. And yet when asked, the shaggy man would say that his own work no longer meant much to him, and the only reason he came to work was to have the privilege of walking home with the elfin man. The shaggy man was Albert Einstein. His walking companion was Kurt Gödel.

What made Gödel, a figure unknown to the public, so revered among his colleagues? The superlatives kept coming. Einstein called him the greatest logician since Aristotle. The legendary mathematician John von Neumann who was his colleague argued for his extraction from fascism-riddled Europe, writing a letter to the director of his institute saying that “Gödel is absolutely irreplaceable; he is the only mathematician about whom I dare make this assertion.” And when I made a pilgrimage to Gödel’s house during a trip to his native Vienna a few years ago, the plaque in front of the house made his claim to posterity clear: “In this house lived from 1930-1937, the great mathematician and logician Kurt Gödel. Here he discovered his famous incompleteness theorem, the most significant mathematical discovery of the twentieth century.”

The reason Gödel drew gasps of awe from colleagues as brilliant as Einstein and von Neumann was because he revealed a seismic fissure in the foundations of that most perfect, rational and crystal-clear of all creations – mathematics. Of all the fields of human inquiry, mathematics is considered the most exact. Unlike politics or economics, or even the more quantifiable disciplines of chemistry and physics, every question in mathematics has a definite yes or no answer. The answer to a question such as whether there is an infinitude of prime numbers leaves absolutely no room for ambiguity or error – it’s a simple yes or no (yes in this case). Not surprisingly, mathematicians around the beginning of the 20th century started thinking that every mathematical question that can be posed should have a definite yes or no answer. In addition, no mathematical question should have both answers. The first requirement was called completeness, the second one was called consistency.

The overarching goal of mathematics was to prove completeness and consistency starting from a fundamental, minimal set of axioms, much like Euclid had built up the grand structure of plane geometry starting with a handful of axioms in his marvelous ‘Elements’.

Mathematicians had good reasons to be optimistic. The 19th century had perhaps been the most important for the development of the discipline, solidifying results in analysis, geometry and other key mathematical domains. The mathematical giants of that time, textbook names like Gauss, Dedekind, Cantor and Riemann, had put mathematics on a solid foundation. It was against this background that Bertrand Russell and Alfred North Whitehead wrote their magnum opus, the dense ‘Principia Mathematica’, which sought to ground mathematics in logic. Unnecessary axioms of mathematics would be discarded, the superstructure trimmed, and mathematics would be put on a sound basis of symbolic logic. One of the major goals of their work was to resolve any paradoxes in mathematics that would lead to statements akin to the famous Liar’s Paradox – “I am lying” – that are false when they are true and true when they are false. Russell and Whitehead thought that paradoxes were merely a consequence of not clarifying the axioms and the deductions from them well enough.

The intellectual godfather of the mathematicians was David Hilbert, perhaps the leading mathematician of the first few decades of the twentieth century. In a famous 1900 address at the International Congress of Mathematicians in Paris, Hilbert set out 23 open problems in mathematics that he hoped would engage the brightest minds of the next few decades; it is a measure of Hilbert’s perspicacity in picking these problems that some of them are still unsolved and pursued. The second among these problems was to prove the consistency of arithmetic using the kind of axiomatic approach developed by Russell and Whitehead. Hilbert was confident that within a few decades at most, every question in mathematics would have a definite answer that could be built up from the axioms. He famously proclaimed that there would be no ‘ignorabimus’ (a statement whose truth or falsity could never be known) in mathematics. Mathematicians soon busied themselves with carrying out Hilbert’s program.

When Hilbert gave his talk, Kurt Gödel was still six years away from being born. Thirty years later he would drive a wrecking ball into Hilbert’s dream, showing that even this most exact, pristine of all human intellectual endeavors contained truths that are fundamentally undecidable. And he did it in such a final manner that there could be no debate about it. That is what left brilliant men like Einstein and von Neumann with their mouths agape.

Now we have a biography of Gödel and his times written by veteran science and history writer Stephen Budiansky that is the most evocative and comprehensive biography of the logician written so far for a general audience. The book is really about Gödel and his times rather than his work. There have been some fine books on Gödel until now, including the detailed “Incompleteness” by Rebecca Goldstein, the impressionistic “Gödel: A Life in Logic” by John Casti and most notably, John Dawson’s “Logical Dilemmas” which is perhaps the most complete exploration of the man. But Budiansky’s book is the best one so far that situates Gödel in the magical time that was turn of the century Austria-Hungary, a time that was tragically shattered with a totality approaching anything in mathematics by the onslaught of totalitarianism. Budiansky also sensitively investigates Gödel’s dark side; the same mind that could not tolerate anything that was not precisely defined fell prey to its own exacting standards and unleashed demons that would lead to a life punctuated by paranoid delusions and extreme starvation. When Gödel died in 1978, he weighed 65 pounds.

Gödel’s end was a far cry from his beginning in the glorious years of the Austro-Hungarian empire. The emperor Franz Joseph, an arch Habsburg, prized order above everything else. The town of Brünn in which Gödel was born was one of the most industrialized towns in the empire, and his father Rudolf was a well-to-do managing director of a textile firm. But it was from his mother Marianne, to whom he was closer and who was well-versed in music and the arts, that Kurt got much of his intellect; throughout his life, Marianne would be a crucial link and lifeline through letters. A brother who was a doctor, Rudi, rounded out the family. When Gödel was born, the empire was perhaps the foremost fountain of intellect in Europe and possibly the world. In art and philosophy, music and architecture, science and mathematics, Vienna and Budapest led the way. Names like Freud, Wittgenstein, Klimt and Zweig trickled out of the fin de siècle city in a steady stream. They exemplified Vienna and Eastern Europe’s cafe culture, with places like the Café Reichsrat and Café Josephinum becoming battlegrounds of fervent intellectual debate on the deepest questions of epistemology, fueled by marathon shouting matches lasting into the night, strong black coffee topped with whipped cream and scribbles on the marble tabletops.

It was in this heady intellectual milieu that Gödel grew up. He was an outstanding student at the Realgymnasium and showed a meticulous attention to detail that was to be both his biggest strength and his ruin. He was also often the poster child for the head-in-the-clouds intellectual, and throughout his life, as brilliant as his mathematical acumen was, he often remained oblivious to the state of politics around him. A fondness for what many would consider childish preoccupations like children’s toys and kitschy household objects would punctuate his otherwise fanatical commitment to the most abstract reaches of human thought.

Under the facade of Vienna’s intellectual beehive lay a rotten foundation of class and religious inequality, bitterly growing nationalism and, most fatally, anti-Semitism. Viennese Jews had been liberated by Franz Joseph in 1867, and centuries of bottled up ambition and talent in the face of their persecution found a release that led to unprecedented success not just in the practical arts like medicine and law but also in the most abstract realms of mathematics and philosophy. This success bred resentment among Vienna’s growing middle class gentiles. The collective philosophical talent of both Jews and non-Jews culminated in the creation of the famous Vienna Circle and their philosophy of logical positivism. Logical positivism called for rejecting anything that could not be rigorously scientifically verified, and the philosophers sought to outdo their fellow scientists and place metaphysics on a solid scientific foundation. The philosophers Hans Hahn, who was Gödel’s PhD advisor at the University of Vienna, and Moritz Schlick were the leaders of the movement; their patron saints were the mysterious, penetrating Ludwig Wittgenstein and Bertrand Russell. Wittgenstein deigned to speak to the circle only once and remained a distant figure, while the philosopher of science Karl Popper tried to become an official member but was spurned.

Into this milieu entered Kurt Gödel, only 24 years old. He became a regular member of the Vienna Circle but spoke up rarely, preferring to instead listen and occasionally interject with a penetrating comment. But even then Gödel’s predilections ran counter to the circle’s. While the circle emphasized the existence only of propositions that could be verified by grounding in the real world, Kurt became a staunch Platonist whose belief that mathematical objects existed in a world of their own without any human intervention only became deeper during his life. For Gödel, numbers, sets and mathematical axioms were as real as planets, bacteria and rocks, simply waiting to be discovered and existing independent of human effort. A large part of this existence stemmed from the sheer beauty of mathematical structures that Gödel and his colleagues were uncovering: how could such beautiful objects exist only under the pre-condition of discovery by ordinary human minds?

By 1930 the Platonist Gödel was ready to drop his bombshell in the world of mathematics and logic. In September 1930, a big conference was going to be organized in Königsberg. German mathematics had been harmed because of Germany’s instigation of the Great War, and Hilbert’s decency and reputation played a big role in resurrecting it. Just before the conference Gödel met with his friend Rudolf Carnap, a founding member of the Vienna Circle, in the Café Reichsrat. There, perhaps scribbling a bit on the marble table, he told Carnap that he had just shown that Hilbert and Russell’s program to prove the completeness and consistency of mathematics was fatally flawed. A few days later Gödel delivered his talk at the conference. As often happens with great scientific discoveries, few people understood the significance of what had just happened. The one exception was John von Neumann, a child prodigy and polymath who was known for jumping ten steps ahead of people’s arguments and extending them in ways that their creators could not imagine. Von Neumann buttonholed Gödel, fully understood his result, and then a week later extended it to a startling new domain, only to find through a polite note from Gödel that Gödel had already done it.

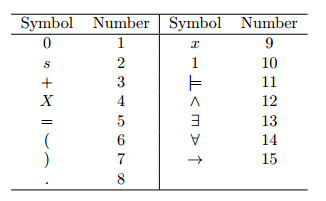

So what had Gödel done? Budiansky’s treatment of Gödel’s proof is light, and I would recommend the 1950s classic “Gödel’s Proof” by Ernest Nagel and James Newman for a semi-popular treatment. Even today Gödel’s seminal paper is comprehensible in its entirety only to specialists in the field. But in a nutshell, what Gödel had found using an ingenious bit of self-referential mapping between numbers and mathematical statements was that any consistent mathematical system that could support the basic axioms of arithmetic as described in Russell and Whitehead’s work would always contain statements that were unprovable. This ingenious scheme included a way of encoding mathematical statements as numbers, allowing numbers to “talk about themselves”. What was worse and even more fascinating was that the axiomatic system of arithmetic would contain statements that were true, but whose truth could not be proven using the axioms of the system – Gödel thus showed that there would always be a statement G in this system which would, like the old Liar’s Paradox, say, “G is unprovable”. If G is true it is unprovable by definition, but if G is false, then it would be provable, and the system would have proved a false statement. Thus, the system would always contain ‘truths’ that are undecidable within the framework of the system. And lest one thought that you could then just expand the system and prove those truths within that new system, Gödel infuriatingly showed that the new system would contain its own unprovable truths, and so on ad infinitum. This is called the First Incompleteness Theorem.
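The idea of encoding statements as numbers can be made concrete with a toy example. The sketch below is only an illustration, not Gödel's actual scheme: the seven-symbol alphabet, the symbol codes and the function names are all assumptions chosen for brevity. It assigns each symbol a small positive integer and encodes a formula as a product of prime powers, so that unique factorization lets the formula be recovered from the number.

```python
from itertools import count

# Toy alphabet and symbol codes (an assumption for illustration only;
# Gödel's own numbering used a different, richer symbol set).
SYMBOLS = ["0", "S", "+", "*", "=", "(", ")"]
CODE = {sym: i + 1 for i, sym in enumerate(SYMBOLS)}
DECODE = {code: sym for sym, code in CODE.items()}


def primes():
    """Yield 2, 3, 5, 7, ... by trial division against earlier primes."""
    found = []
    for candidate in count(2):
        if all(candidate % p for p in found):
            found.append(candidate)
            yield candidate


def godel_number(formula):
    """Encode a formula as 2^c1 * 3^c2 * 5^c3 * ..., where ci codes symbol i."""
    n = 1
    for sym, p in zip(formula, primes()):
        n *= p ** CODE[sym]
    return n


def decode(n):
    """Recover the formula by reading off prime exponents in order."""
    symbols = []
    for p in primes():
        if n == 1:
            break
        exponent = 0
        while n % p == 0:
            n //= p
            exponent += 1
        symbols.append(DECODE[exponent])  # KeyError if n is not a valid code
    return "".join(symbols)


number = godel_number("S0=S0")   # the formula "1 = 1" in successor notation
print(number)
print(decode(number))
```

Because every formula becomes an ordinary integer, statements about formulas (such as "this formula is provable") become statements about integers, which is what lets arithmetic refer to itself.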

The Second Incompleteness Theorem showed that such a system cannot prove its own consistency, in effect saying that any formal system rich enough to express arithmetic can prove its own consistency only if it is in fact inconsistent. This was an even more damning conclusion. Far from getting rid of the paradoxes that Russell and Whitehead believed would be clarified if only one understood the axioms and the deductions from them well enough, Gödel showed that such paradoxes are as foundational a feature of mathematical systems as anything else. As far as Hilbert was concerned, Gödel had uncovered a rotten foundation underlying mathematics that doomed Hilbert’s program forever.

Ironically, just a day after Gödel’s talk, Hilbert gave a speech reinforcing his belief that there would be no ‘ignorabimus’ in mathematics and ending with a famous refrain: “Wir müssen wissen – wir werden wissen.” (“We must know – we will know.”). As sometimes happens when a great mind declares a truth in a scientific discipline with such finality, reactions can range from disbelief and denial to acceptance. Hilbert himself recognized the significance of Gödel’s results but held out hope that they wouldn’t be as far-reaching as they were thought to be. Von Neumann on the other hand is on record saying that after he heard of the incompleteness theorems, he decided to abandon his own productive work in set theory and the foundations of logic and move on to other topics. Gödel’s work had a seismic impact on that of many other thinkers. His proof that a system made up of purely mechanical, axiomatic procedures would contain undecidable propositions inspired Alan Turing’s own answer in the negative to the question of whether a mechanical computer could decide the truth value of an arbitrary proposition in a finite number of steps. Most notably, Gödel’s ingenious scheme of having numbers represent both themselves as well as instructions to specify operations on themselves is, without him ever knowing it, the basis of digital computing.

Thus by the time he was 24 years old, Gödel had established himself as a logician of the first rank and immortalized his name in history. In the next few years his friends and colleagues spread his gospel around the world, most notably in the United States. The noted mathematician Karl Menger was a close friend and was spending a semester in Iowa, sending Gödel periodic letters describing life in America (“Americans as a rule do not go for walks, they think that dashing around in their cars on Sundays is sufficient recreation.”). Not only did Menger give talks about Gödel’s results in the United States, but he performed a crucial service. Helped by largesse from the brother-sister pair of Louis and Caroline Bamberger, who sold their clothing business to Macy’s, the educator Abraham Flexner had established an institute in Princeton dedicated to pure thought, with no administrative and teaching duties. To populate this heavenly tank Flexner had bagged the biggest fish of them all – Albert Einstein. Along with Oswald Veblen, von Neumann and a few others, Einstein became one of the first faculty members at what came to be called the “Institute for Advanced Study”, although given the exorbitant money that Flexner dangled in front of his faculty in addition to the unique work environment, it quickly came to be christened the “Institute for Advanced Salaries”. Menger recommended that Flexner hire Gödel on a temporary basis. Gödel would visit a few more times before permanently relocating in 1940.

By this time, both the personal currents of life and the larger currents of history would steer Gödel’s destiny. In 1927 he had met Adele Porkert, an older woman who lived across the street from him; they married in 1938. Adele had worked as a nightclub dancer and was a masseuse, qualifications which neither Gödel’s colleagues nor his family considered worthy of his stature. But Adele was to be a true mother to Kurt for the rest of his life. Her role became clear when Gödel started suffering from a kind of psychotic paranoia that would mark him as indelibly as his genius. Starting in the early 1930s, he spent time in sanatoriums, convinced that an apparently weak heart from a bout of rheumatic fever which afflicted him as a child would kill him. More ominously, he started suspecting the sanatorium staff of conspiring to poison him or inject him with lethal substances. He drastically lost weight, and Adele had to feed him food that she had prepared herself to convince him to eat it. In retrospect it is clear that the ultra-logical Gödel also suffered from what we now call obsessive compulsive disorder. He obsessed over his bodily functions, interpreting ordinary signs as signs of trouble – his letters to his mother from America are generously interspersed with accounts of the health of his bowels. Unsurprisingly, this obsession led to a detailed keeping of diaries recording his thoughts and real and perceived symptoms, along with miscellaneous hospital, travel and grocery receipts. It is to Budiansky’s credit that he has combed through these sources to reveal to us the vivid portrait of a methodical, detail-oriented stickler whose very commitment to logic and details would prove to be his undoing.

Political events were also clearly not evolving favorably by the time Gödel first made his way to America. Austrian anti-Semitism had already had a long history, and German-speaking Austrians were fanatically enthusiastic about embracing their former compatriot and army corporal Adolf Hitler. Hitler triumphantly marched into Austria to ecstatic, waving crowds in March 1938 during the Anschluss. But even while Gödel had been proving his famous theorems, the writing had been on the wall. The University of Vienna had been a venue for anti-Semitic demonstrations for a long time, and the Vienna Circle with its Jewish members and commitment to abstract thought and “Jewish science” like relativity was a brightly painted target. In 1936, Johann Nelböck, a mentally troubled former student of Moritz Schlick shot and killed Schlick on the steps of the university, seething under the illusion that Schlick was having an affair with a female student he was obsessed with. Supported by the Nazis and seen as a martyr to the cause of eradicating the foreign element from the body of the Teutonic intellect, Nelböck was sentenced to ten years in prison, only to be promptly released by right-wing authorities in 1938 after the Anschluss. After Schlick’s murder the Vienna Circle effectively dissolved, and with it a glorious intellectual age whose quick demise remains a reminder of how quickly totalitarianism can destroy what takes decades or even centuries to build. After Jewish professors were all dismissed throughout Germany and Austria, Hilbert was asked by the new Nazi minister of education what mathematics was like at the University of Göttingen where he taught. “There is no mathematics anymore at Göttingen”, Hilbert retorted.

Gödel, as involved as he was with the search for mathematical truth, was not finely attuned to what was happening to politics in the country. Two days before Hitler’s takeover of Austria, Menger received a letter about mundane matters of conferences and mathematics from his friend which, as he put it, “may well represent a record for unconcern on the threshold of world-shaking events.” But even Gödel could not ignore what was happening to his colleagues at the university, and after some unpleasant episodes including one in which he was bullied on the streets by Nazi thugs and Adele fended off their taunts with her umbrella, the couple decided to emigrate to America for good. Bureaucratic snafus regarding Gödel’s visa and his new status as a German citizen led to intervention from the director of the Institute for Advanced Study at von Neumann’s goading: that is when von Neumann wrote the remarkable letter urging him to do everything he could to enable Gödel’s emigration, saying that Gödel was absolutely irreplaceable. Because German passengers crossing the Atlantic had to face the dual hazards of Nazi U-boats and potential arrest as enemy aliens by British authorities, Kurt and Adele took the long, scenic route, going through Eastern Europe through Moscow and then taking the Trans-Siberian railroad to Vladivostok, before finally boarding a steamer for San Francisco. Gödel would never leave the Eastern Seaboard of the United States again during his lifetime.

Kurt and Adele arrived in Princeton, a place puckishly described by recent resident Albert Einstein as “a quaint, ceremonial village, full of demigods on stilts”. Gödel had never known Einstein before coming to America, and yet it was Einstein who, along with the Austrian economist Oskar Morgenstern, provided him with the friendship of his Viennese colleagues which he so missed. Einstein and Gödel made for an unlikely pair: the former gregarious, generous, earthy and shabbily dressed, always eyeing the world through a sense of humor; the latter often withdrawn, hyper-logical, critical and unable to lighten up. And yet these exterior differences hid a deep and genuine friendship that went beyond their common background in German culture. Their families often visited each other, and Adele once knitted a woolen vest for Einstein. Animatedly conversing in German with Gödel during their walks home together, Einstein communed with few others at the institute. It was Einstein who accompanied Gödel and Morgenstern to Gödel’s citizenship ceremony. At the ceremony the overly pedantic and meticulous Gödel, who had studied exhaustively for the citizenship test above and beyond the standard requirements, told the judge that he had found a flaw in the Constitution that would allow the United States to turn into a dictatorship. Einstein and Morgenstern hastily stopped him from saying anything further and the ceremony progressed smoothly.

But the real reason Einstein so admired Gödel was likely because he shared Gödel’s unshakeable belief in the purity of the mathematical constructs governing the universe. Einstein who was not formally religious nevertheless always harbored a deep belief that the laws of physics exist independently of human beings’ abilities to identify and tamper with them – that was one reason he was so uneasy with the then standard interpretation of quantum mechanics which seemed to say that there was no reality independent of observers. Gödel outdid him and went one step further, believing that even numbers and mathematical theorems exist independently of the human mind. It was this almost spiritual and religious belief in the objective nature of mathematical reality that perhaps formed the most intimate bond between the era’s greatest theoretical physicist and its greatest logician. It also helped that Gödel got interested in Einstein’s general theory of relativity, once playing with the equations and startling Einstein by concluding that the theory allowed for the existence of closed timelike curves – in other words, a universe without past and future, without time. For Gödel’s Platonic mind, this kind of result based purely on mathematics and without any physical basis was exactly the kind of absolute mathematical truth he believed in.

Oskar Morgenstern’s friendship with Gödel was even deeper, in part because it outlasted Einstein and continued almost until Gödel’s own death. Morgenstern, who combined worldly wisdom with brilliance in economics, had made a name for himself by writing “Theory of Games and Economic Behavior” with von Neumann, which established the field of game theory. Morgenstern worried about Gödel’s work, about Gödel’s health and Gödel’s marriage. One of the main sources on Gödel’s life is Morgenstern’s copious, often heartbreaking notes on Gödel’s worries and mental deterioration in his last years. He saw that Adele, while devoted to Kurt, was not a good fit in snobbish Princeton. A young Freeman Dyson vividly described an uncomfortable scene at a party where a very drunk Adele grabbed him and forced him to dance for twenty minutes while Kurt miserably stood by; Dyson could only imagine the horror of their lives. But Adele stayed utterly loyal to Kurt, feeding him, humoring his paranoid health worries and generally taking good care of him.

After coming to the institute Gödel contributed one significant piece of work that added to the already hallowed place in mathematical history he enjoyed. In his famous 1900 address, the problem Hilbert had put at the top of his list was the so-called Continuum Hypothesis. The hypothesis deals with one of the most startling and deepest aspects of mathematics – a comparison of different kinds of infinity. That there are different kinds of infinity was discovered by Georg Cantor and came as a bombshell. Cantor showed that the “first” kind of infinity, called a countable infinity, was represented by the set of natural numbers. But there was another kind of much larger infinity, an uncountable infinity, represented by the real numbers. It may seem absurd to say that one infinity is larger or smaller than another, but using ingenious arguments Cantor showed that the real numbers cannot be put into a one-to-one correspondence with the natural numbers and form a strictly larger set. The Continuum Hypothesis asked if there is a third kind of infinity between that of the natural numbers and the real numbers.
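Cantor’s argument that the real numbers cannot be paired off with the natural numbers is usually presented as a “diagonal” construction. As a rough illustration only, the Python sketch below applies the diagonal trick to a small finite list of 0/1 sequences; the function name `diagonal_escape` and the sample rows are made up for this example and come from no source discussed here. A finite list proves nothing about infinity, but the same recipe applied to any purported complete list of infinite sequences produces a sequence the list has missed.

```python
def diagonal_escape(rows):
    """Build a 0/1 sequence that differs from rows[i] at position i.

    Assumes rows is a square list: n sequences, each of length at least n.
    """
    return [1 - rows[i][i] for i in range(len(rows))]


# Hypothetical sample data: four sequences of four binary digits each.
rows = [
    [0, 0, 0, 0],
    [1, 1, 1, 1],
    [0, 1, 0, 1],
    [1, 0, 1, 0],
]

new_row = diagonal_escape(rows)

# The new sequence disagrees with every listed sequence in at least one
# position, so it cannot be any of them.
print(new_row)
print(new_row in rows)
```

Because the new sequence is built to disagree with the first row in the first digit, the second row in the second digit, and so on, it can never appear in the list, however the list was chosen.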

The problem is still unsolved, but Gödel made a significant dent by showing that the negation of the hypothesis could not be proved from standard set theory. This is not the same as showing that the hypothesis is true, but it does result in one strike in favor of it. A bigger advance came in 1963 when mathematician Paul Cohen showed that the hypothesis is independent of standard set theory; that is, either the hypothesis or its negation can be added to the axioms of standard set theory without destroying its consistency. For all of Gödel’s scathing remarks and frequent silence about other mathematicians’ work, he was profusely generous toward Cohen when Cohen sent him his proof of the independence of the Continuum Hypothesis, a problem that Gödel himself had tried and failed to solve for more than twenty years.

Gödel’s peculiar obsessions and pedantry made him a difficult colleague, and his promotion to full professor was held up until 1953 because the faculty feared he would be challenging to deal with when it came to the obligatory administrative matters that full professors had to busy themselves with. Once again von Neumann came to his friend’s rescue, asking, “If Gödel cannot call himself Professor, how can the rest of us?” But even after Gödel got promoted his insecurities did not leave him, and he kept on feeling a mixture of self-pity and suspicion that the institute was conspiring to demote or fire him. He could nonetheless be a very loyal friend and colleague, testifying, for instance, against removing Oppenheimer as director when Oppenheimer’s enemy Lewis Strauss tried to oust him after his infamous security hearing. Especially in his later years, young mathematicians like Martin Davis and Hao Wang observed a Gödel who was friendly, curious and funny.

After Einstein’s death in 1955 and von Neumann’s excruciatingly painful death in 1957, Gödel began to rely increasingly on Adele and Morgenstern. As a measure of how startlingly original his mind remained, in March 1956, as von Neumann was dying, Gödel sent him a letter that is widely regarded as containing the first statement of a famous problem in computer science, the P versus NP problem. His exalted mind often delighted in the simplest of objects, including trinkets and cheap children’s toys bought from convenience stores. The fear that his colleagues had about his handling of administrative duties proved unfounded. If anything, he erred in the opposite direction, meticulously laboring over member applications, exhaustively analyzing them and offering suggestions on points others had missed.

But the spark of genius that had lit the mathematical world on fire seemed to have gone missing. In his last few years, Gödel became obsessed with the belief that there was a conspiracy not just against him but also against a hero of his, the 18th century mathematician and polymath Gottfried Wilhelm Leibniz. He became convinced that there was a plot to keep Leibniz’s work hidden from the world. In the 1970s, he began to see a psychiatrist whose detailed notes Budiansky opens the book with: “Believes he has been declared incompetent and that one day they will realize he is free and take him away…fear of destitution, loss of position at institute because he hasn’t done anything for past year…brought out delusional ideas, including that brother is the evil person behind plot to destroy him…believes he wants to take his wife, house and position at the institute.” Clearly, the visits of his mother and brother Rudi to America, while welcomed initially, had in his mind also turned into a plot to take over his world. It didn’t help that by this time Gödel’s work had been popularized enough that he received the Einstein Prize from Einstein himself, the National Medal of Science from President Gerald Ford and that crowning sign of fame – letters from all over the world from fans and crackpots.

There was little that anyone could do to help. In 1977 Morgenstern himself received a diagnosis of terminal cancer and became paralyzed. His tragic last notes and letters indicate the struggle he was facing as Gödel increasingly came to rely on him, phoning him two or three times every day to communicate his latest worries, even as Morgenstern was facing his own mortality. The last straw came when Adele fell sick and had to spend several months in a hospital. After Morgenstern, she had been Gödel’s last link to the sane world, and in spite of neighbors and colleagues trying to help out, he stopped eating, convinced that he was being poisoned through his food and, unlike in Vienna in the 1930s, not having Adele around to feed him with tender, loving care. When Adele came back the end was already near, and Gödel entered the hospital for the last time. The cause of death was “malnutrition”, although most people believed that slow suicide was the more likely explanation.

How do we deal with the legacy of someone like Gödel? Philosophically, Gödel’s theorems had such a shattering impact on our thinking because, along with two other groundbreaking ideas of 20th century science – Heisenberg’s Uncertainty Principle and quantum indeterminacy – they revealed that human beings’ ability to divine knowledge of the universe had fundamental limitations. But while Heisenberg and the quantum pioneers found limits to understanding rooted in the physical world, Gödel found these limits even in the rarefied world of pure ideas. Nonetheless, mathematics continued to thrive within the boundaries of his theorems, gathering Fields Medals and revolutionizing new fields like algebraic topology and category theory. The deeper significance of Gödel’s work therefore, as he explained in a lecture, is that it’s hard to avoid a connection between his theorems and a Platonic world of numbers and ideas existing independent of our efforts. This is because if human beings are fundamentally incapable of finding out all the results of axiomatic systems, it means there will always be some results outside the grasp of even our most exalted intellects. In our limitations lies mathematics’ freedom.

But that also says something about human minds and points to a debate still raging – whether the mind itself is some kind of Turing machine. The implication of Gödel’s proof is that if the mind is indeed a machine, it will be subject to the incompleteness theorems and there will always be truths beyond our grasp. If, on the other hand, the mind is not a machine, then it cannot be described through purely mechanistic means. Both choices point to a human mind and a world it inhabits that are “decidedly opposed to materialistic philosophy”. Beyond this possible truth is another one that is purely psychological. We can either feel morose in the face of the fundamental limits to knowledge that Gödel revealed, or we can, as the historian George Dyson put it, “celebrate his proof that even the most rigid numerical bureaucracy contains the tools by which higher truth will always be able to effect an escape.” Gödel offers us an invitation to an open world, a world without end.

But what about the paradoxes of the man himself, someone devoted to the highest reaches of rational thought in the most logical of all fields of inquiry, yet one who seemed to have had an almost mystical belief in the spiritual certainty of mathematics and often gave in to the worst impulses of irrationality? I think a clue comes from Gödel’s obsession with Leibniz in his last few years. Leibniz was convinced that this is the best of all possible worlds, because that is the only thing a just God could have created. Like his fellow philosophers and mathematicians, Leibniz was religious and saw no contradictions between science and faith, between teasing out the truths of the world rationally and believing in a hereafter. In 1961, a few years before his mother Marianne’s death, Kurt wrote her a letter setting out his belief that a God probably exists: “For what kind of sense would there be in bringing forth a creature (man), who has such a broad range of possibilities of his own development and of relationships, and then not allow him to achieve 1/1000 of it?” Like his fellow philosopher Leibniz, Kurt Gödel could perfectly reconcile the rational and the transcendental. In doing this, he proved himself to be much more at home in the 18th century than the 20th. Perhaps that vision of a reconciliation between rational thought and seemingly irrational human frailty and belief will be, even more than his seminal mathematical discoveries, his enduring legacy.